21 Artificial Intelligence Recreations Of Famous Paintings, Historical Figures, And Cartoons By This Artist

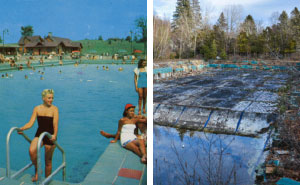

Artificial intelligence has become very accessible to the public, which has made it very popular. Artists everywhere are combining their skills with AI to create all kinds of edits. Many apps even include AI filters that make you look older, younger, or like a different gender.

San Francisco-based graphics artist Nathan Shipley uses this new technology to see how famous paintings and cartoon characters would look if they were real people, and how artificial intelligence recreates historical figures from paintings or from the portraits on banknotes.

On his website, Nathan says: "I am a technical director, creative technologist, visual effects supervisor, and motion graphics artist with over a decade of experience. Currently exploring the intersection of art and AI."

More info: Instagram | twitter.com | nathanshipley.com

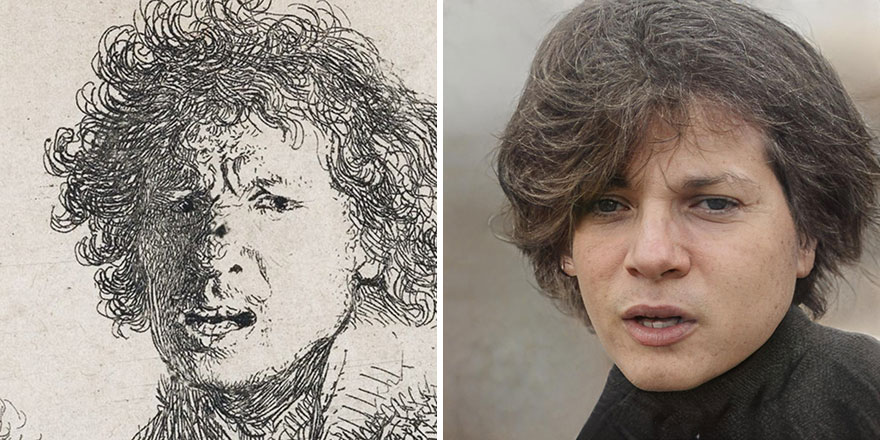

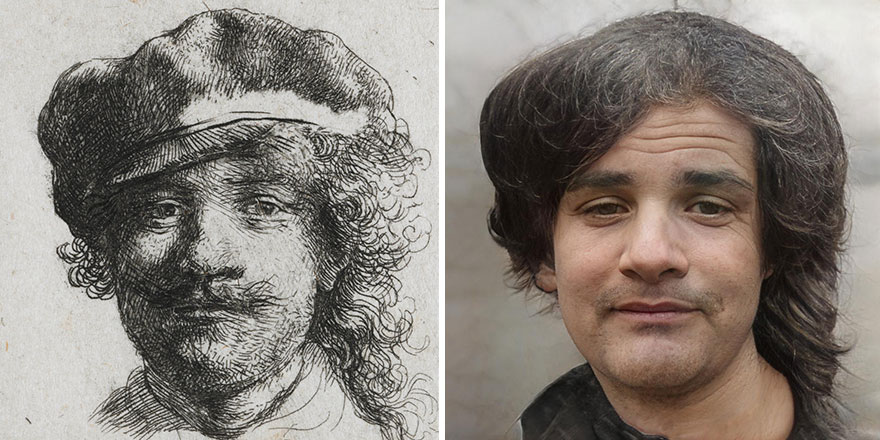

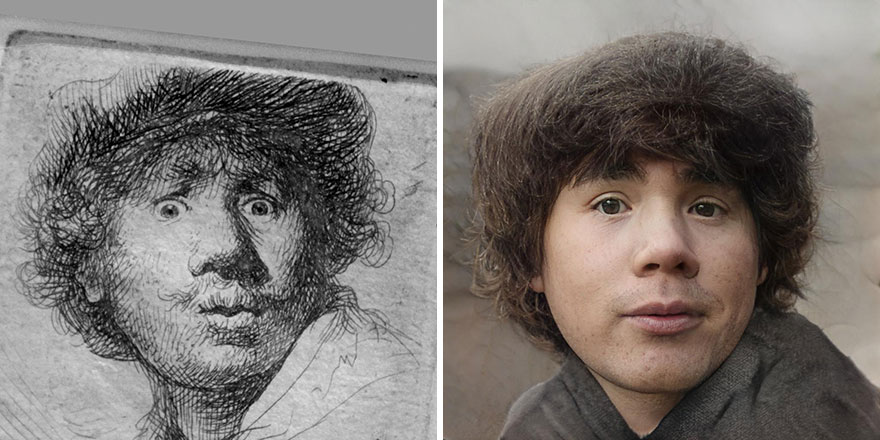

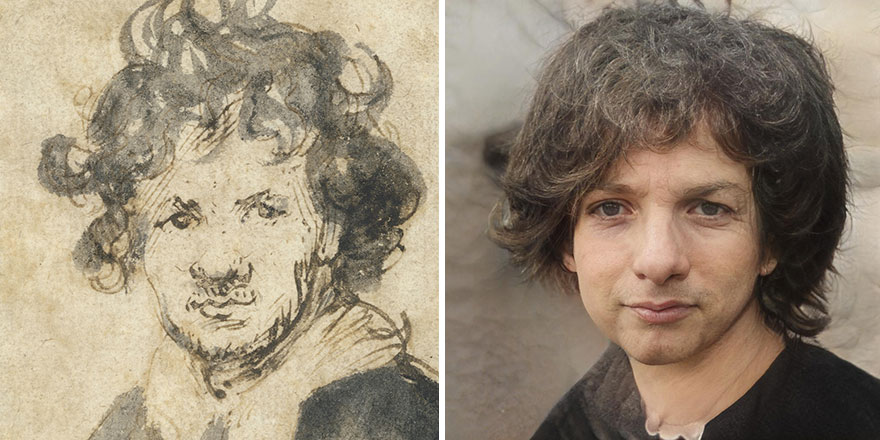

Rembrandt

Nathan Shipley answered some questions for us. He told us what inspired him to create these edits: "On one hand, I love to create impossible images and explore new technology. I’ve got a background in animation and visual effects, and once I saw what is possible with AI and machine learning tools, I realized there are so many things that could be done with them that would otherwise be impossible. Even some things that are technically possible with VFX and CG can be very time-consuming or expensive, whereas AI enables entirely new possibilities.

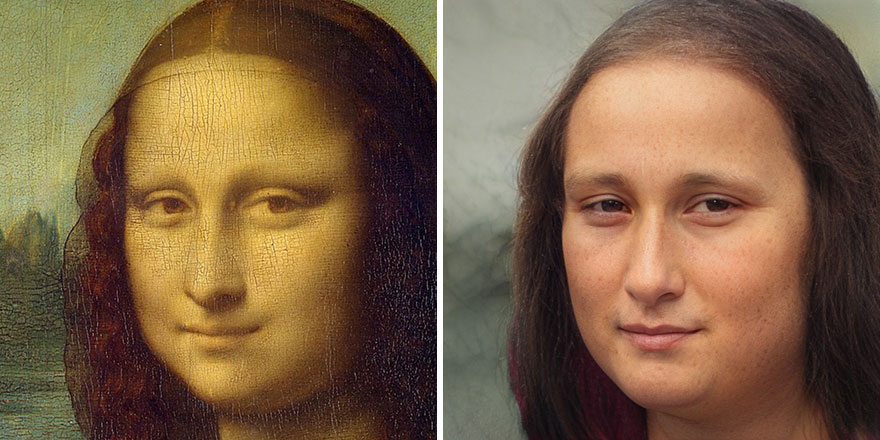

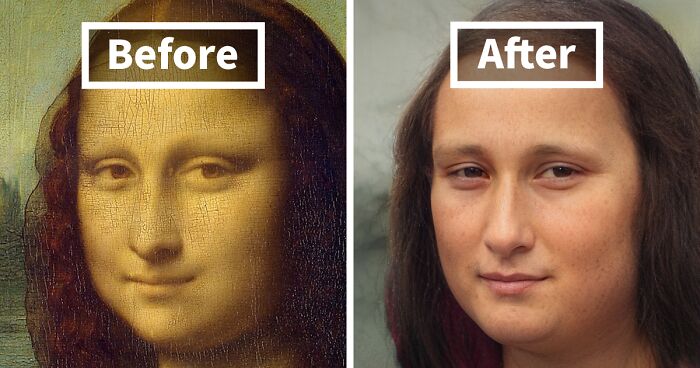

On the other hand, it’s fascinating to explore how an AI model built on a particular dataset with a particular framework can 'see' the world and then transform images. The AI 'knows' only what it has already seen and filters the world through this lens. Any small tweak to the dataset, the training parameters, the model, or the input imagery can change the output. This is a space to explore how artificial neural networks interpret the world in a way that can be similar to our own minds. I’m not saying that an image I created is what Mona Lisa actually looked like, but it is how the machine sees her based on this particular arrangement of variables. That, to me, is fascinating.

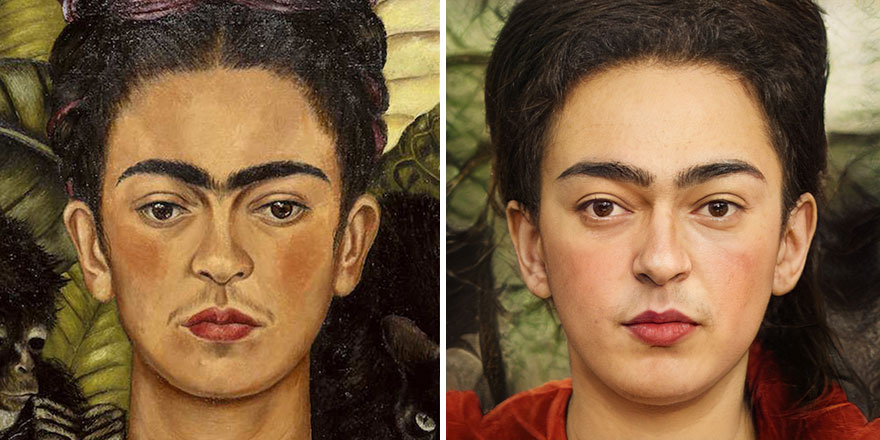

I also want to add that these are definitely just experiments and that there are some pretty obvious limitations of the AI and the datasets that it's trained on. Frida loses her unibrow, Miles' hair gets mangled, Lil Miquela's freckles disappear, hats turn to hair, and Ben Franklin even gets an earring! These are just some examples of how this particular combination of variables recreates a face that comes with a lot of randomness and inconsistencies. Keeping diversity in AI is an area of active research."

Miles Morales From Spider-Man: Into The Spider-Verse

"I have always loved to draw, take photos, and paint. I’ve also always had a computer around since I was in elementary school using a 286 with MS-DOS and no hard drive. The combination of traditional art and technology has been a natural step for me and led to my career in VFX and animation.

My current interest in exploring face manipulation and generative art using AI and machine learning started with a project for the Salvador Dali museum called Dali Lives in 2018. I used early deepfake code to bring Dali back to the museum to talk to visitors about his art. From here, I moved into working with GANs and realized how powerful neural networks can be for image processing and generation! For me, creating art is both an expression of curiosity and an act of exploration through process."

Mona Lisa

The AI completely left out her see-through veil and the middle part in her hair.

Elastigirl From The Incredibles

"My favorite part about creating art is the process of actually making it; the journey and all the exploration that goes with it. I love having a problem and no idea how I’m going to solve it, putting my headphones on, losing track of time, and just trying things until it works.

It’s great to see a finished image, but it’s even more exciting to try new code, use code in ways it wasn’t meant to be used, combine different tools, and create entirely new art through new processes."

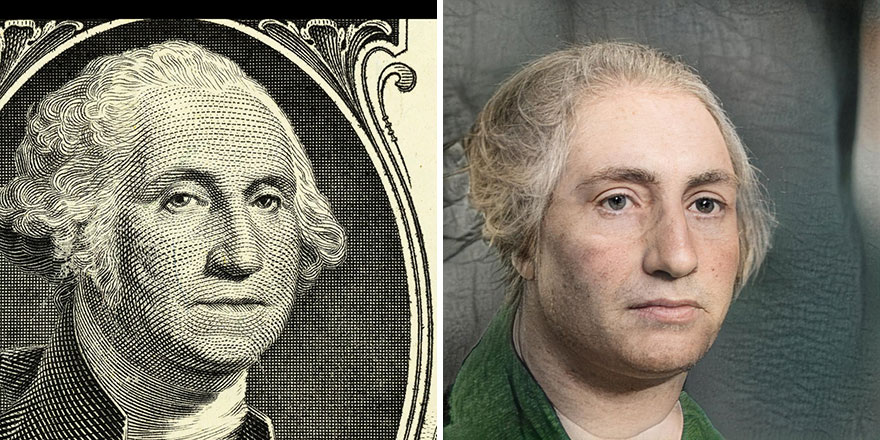

Benjamin Franklin

Hippie? Are you in your 70s? Get off my lawn.

It looks like he took a picture of some guy that kinda looks like Ben Franklin, but not really.

The eyes and hair are wrong this time. And Ben's more disapproving in the drawing.

This one is not very successful; the AI has created a completely different person. The mouth, the drooping cheeks, even the gaze no longer says "I am dissatisfied with the overall situation" but "This youth of today is in far too much of a hurry".

Miguel From Coco

Nathan has a 4-year-old son and he loves to explore the world with him: "We fish, go to the beach, paint, draw, read, play baseball, and pretend. Otherwise, I love running—it calms me down and focuses my mind."

The artist tells us more about himself: "I’m just a guy from the Midwest of the United States. I grew up in Indiana, went to Indiana University, and then worked doing animation for TV at the Indianapolis Motor Speedway. Eventually, I was ready to leave Indiana and go to California.

I was very fortunate to first have the opportunity to travel around the world for a year with no plan before moving to San Francisco. I flew to Lima, Peru, on a one-way ticket and spent the next 12 months staying in a handful of cities in South America, Eastern Europe, Turkey, India, and Thailand. If I got to a place I liked, I got an apartment and stayed for a month.

Traveling, being curious about the world, and meeting many different people go quite well with creating art and just living life in general.

I did eventually land in San Francisco, where I’ve spent the last 10 years working on animation, VFX, and creative technology projects at Google, Intel, and currently the ad agency Goodby, Silverstein & Partners."

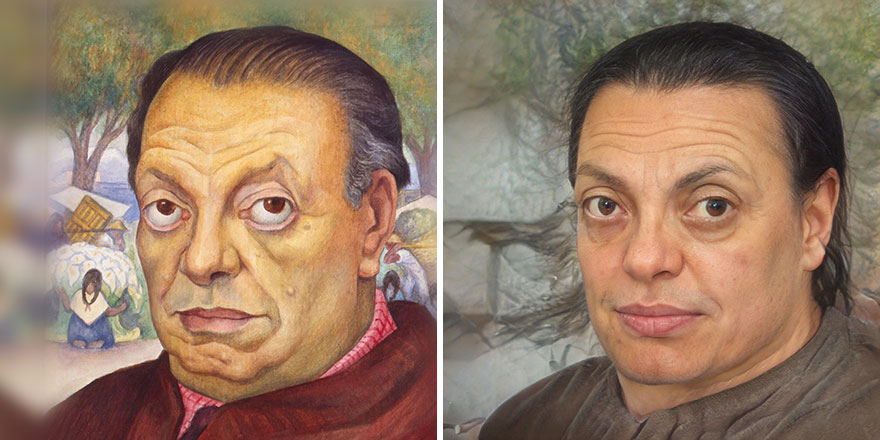

Diego Rivera

George Washington

Nathan explains how he creates these edits: "It’s a very iterative and explorative process. In the simplest terms, a face is used as input for the software and the software generates new faces based on the input. I have examples where I am creating 'real' versions of painted or cartoon people and also cartoon versions of real people.

More specifically, to create real people, the central part of the process uses machine learning to find a human face with a similar shape to the input among the faces that an AI network created by Nvidia can generate. This network is a GAN (a kind of machine learning framework; this particular one is called StyleGAN) trained on a dataset of 70,000 human faces called FFHQ. The AI learns to generalize what a human face looks like and can then generate new human faces that don’t actually exist but look very realistic.

Because the network is trained on images of real people, it’s very good at creating more real people, even when you give it an input that is just a drawing or painting.

I have other examples using the same tool (StyleGAN) to create new images based on 400-year-old woodcuts of Aesop’s Fables illustrations, Beeple’s library of everydays, and even custom datasets to make music videos for musicians like Qrion and Hiatus. A lot of these are on my site here."
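The step Nathan describes, finding a face in StyleGAN's network that resembles a painted or drawn input, is often called GAN inversion or projection. The sketch below illustrates the optimization-based flavor of it under heavy simplifying assumptions: the "generator" is a hypothetical toy linear map rather than StyleGAN, "images" are short lists of numbers, and the search is plain gradient descent on pixel error. It is not Nathan's actual pipeline; encoders such as pixel2style2pixel instead train a network to predict the latent vector in a single pass.

```python
import random

# Hypothetical toy stand-in for a pretrained generator such as StyleGAN:
# a fixed linear map from a 4-number latent vector to an 8-number "image".
# Real generators are deep networks with millions of weights.
LATENT_DIM = 4
IMAGE_DIM = 8

_rng = random.Random(0)
# Top block is the identity and the bottom block holds small random weights,
# which keeps this toy problem well-conditioned so gradient descent converges.
W = [[1.0 if i == j else 0.0 for j in range(LATENT_DIM)] for i in range(LATENT_DIM)]
W += [[_rng.uniform(-0.5, 0.5) for _ in range(LATENT_DIM)]
      for _ in range(IMAGE_DIM - LATENT_DIM)]


def generate(z):
    """Map a latent vector z to a flat list of 'pixel' values."""
    return [sum(row[j] * z[j] for j in range(LATENT_DIM)) for row in W]


def reconstruction_loss(z, target):
    """Squared error between the generated image and the target image."""
    return sum((g - t) ** 2 for g, t in zip(generate(z), target))


def project(target, steps=500, lr=0.05):
    """Search latent space for the z whose generated image best matches target."""
    z = [0.0] * LATENT_DIM
    for _ in range(steps):
        residual = [g - t for g, t in zip(generate(z), target)]
        # Gradient of the squared error with respect to z is 2 * W^T * residual.
        grad = [2.0 * sum(W[i][j] * residual[i] for i in range(IMAGE_DIM))
                for j in range(LATENT_DIM)]
        z = [zj - lr * gj for zj, gj in zip(z, grad)]
    return z


if __name__ == "__main__":
    painting = [0.9, -0.2, 0.4, 0.1, 0.3, 0.0, -0.5, 0.2]  # hypothetical input "image"
    z = project(painting)
    print("latent:", [round(v, 3) for v in z])
    print("remaining error:", reconstruction_loss(z, painting))
```

Because the toy generator can only produce images within its own range, projecting a "painting" that lies outside that range returns the closest image the generator can make. That is loosely analogous to why a network trained only on photos tends to turn a drawing into a realistic face.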

Frida Kahlo

It’s so sexist that it took out her very real unibrow, which she was proud to wear.

"I have a core set of tools that I use from my background in animation and VFX (Photoshop, After Effects, C4D, Maya, Nuke), but the most interesting tools usually come from GitHub repos released by academics and machine learning researchers. These are often run by editing Python code on a Linux machine, which controls a machine learning library like TensorFlow or PyTorch.

In fact, almost everything about these face images comes directly as output from the Python code. I’ve been particularly interested in exploring Nvidia’s StyleGAN and a StyleGAN encoder called pixel2style2pixel."

Nathan says that the actual images take minutes to create; however, he had to go a long way to learn everything: "All of the learning and background I needed to get to this point has been a couple of years of exploration and trial and error. I even attended a conference at MIT called GANocracy back in 2019.

I’ve built an art player, for example, that can generate completely new, never-ending, totally novel art in real time. Frames are made on the fly! However, training the model and writing the code for the player was weeks of work and processing time."
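A player that makes never-ending frames on the fly is commonly built as a "latent walk"; the sketch below assumes that approach, which the article does not confirm is how Nathan's player works. It draws random points in latent space and glides between them with spherical linear interpolation (slerp), yielding one latent vector per frame. Each vector would then be fed to a trained generator, which is not included here. The 512-dimensional default matches StyleGAN's latent size, while `latent_walk` and `frames_per_segment` are hypothetical names chosen for illustration.

```python
import math
import random
from itertools import islice


def slerp(a, b, t):
    """Spherical linear interpolation between latent vectors a and b (0 <= t <= 1)."""
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    cos_omega = sum(x * y for x, y in zip(a, b)) / (norm_a * norm_b)
    omega = math.acos(max(-1.0, min(1.0, cos_omega)))  # angle between a and b
    sin_omega = math.sin(omega)
    if sin_omega < 1e-6:
        # (Anti)parallel vectors make the slerp formula undefined; use a straight line.
        return [(1.0 - t) * x + t * y for x, y in zip(a, b)]
    wa = math.sin((1.0 - t) * omega) / sin_omega
    wb = math.sin(t * omega) / sin_omega
    return [wa * x + wb * y for x, y in zip(a, b)]


def latent_walk(dim=512, frames_per_segment=60, seed=None):
    """Yield an endless stream of latent vectors, one per video frame."""
    rng = random.Random(seed)
    current = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    while True:
        target = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        for step in range(frames_per_segment):
            # step == 0 reproduces `current`, so consecutive segments join seamlessly.
            yield slerp(current, target, step / frames_per_segment)
        current = target


if __name__ == "__main__":
    # Take the first 120 frames' worth of latents; each would go to a generator.
    first_frames = list(islice(latent_walk(seed=42), 120))
    print(len(first_frames), len(first_frames[0]))
```

Slerp is used instead of a straight line because random high-dimensional latents sit near a sphere, and moving along that sphere tends to keep intermediate frames looking like plausible outputs.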

Rembrandt

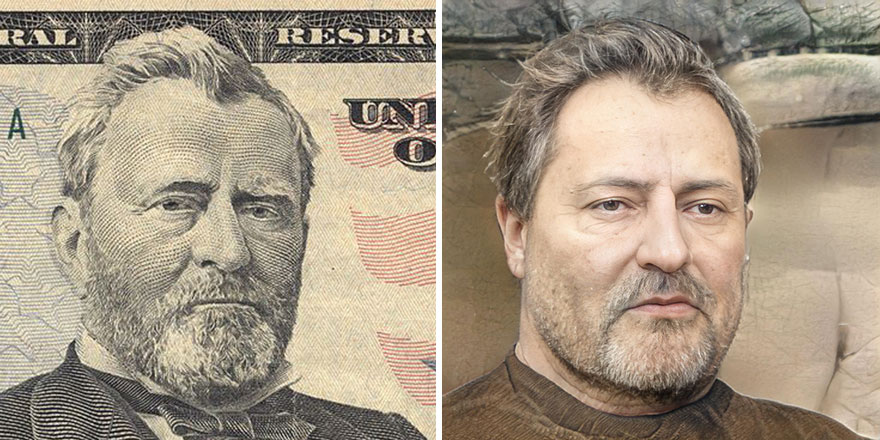

Ulysses S. Grant

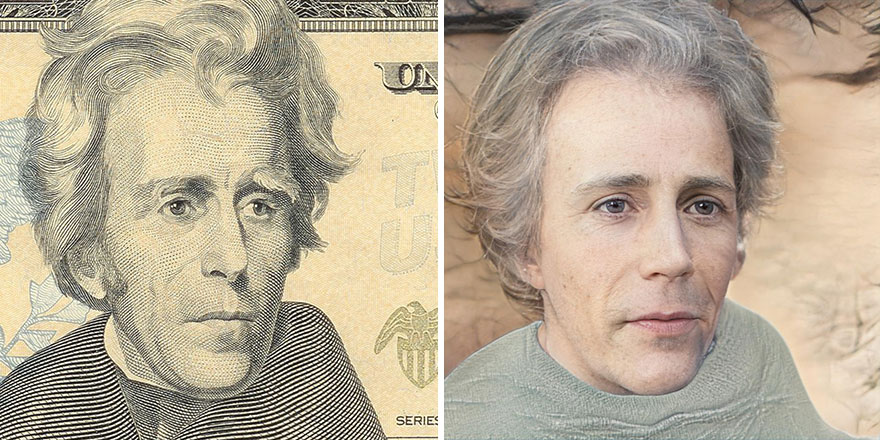

The artist shared how he chooses which people or characters to recreate: "I pick characters that I love (Miguel from Coco, for example) or historic people that we don’t actually have photographs of. Some faces don’t work as well as others, but it’s really exciting when there is a compelling result! A lot of this is trial and error and me just publicly sharing the tests that I make as I go.

For example, I would love to see what Mona Lisa might look like and now I’ve got a realistic face that might be like her. I’m not saying that it is Mona Lisa, but it’s a possibility.

When people see my edits, they say everything from 'amazing!' to 'creepy!' to 'that looks like my cousin!' They seem to be getting a good amount of attention, so at the very least, they’re interesting!"

Lil Miquela

Andrew Jackson

"Overall, I think the space of generative and AI art is a fascinating and very deep well to explore. I’d certainly encourage readers that are interested to try it out! The technical hurdles can seem daunting, but with a little bit of background, you can really Google your way through a lot of this.

It’s also the sort of thing where academics and researchers present these technologies in a very academic or complicated-sounding way. Understanding a paper called 'A Style-Based Generator Architecture for Generative Adversarial Networks' can seem daunting. However, seeing imagery created by artists with the same technology can be very inspiring!

I’d highly encourage readers to check out the work of Memo Akten, Scott Eaton, Mario Klingemann, Refik Anadol, Helena Sarin, and Ben Snell, to name just a few. These are the artists who have been foundational in my own interest in exploring AI and machine learning."

Diego Rivera

Mr. Incredible From The Incredibles

What do you think of these edits? Tell us in the comments and vote for your favorite ones! Don't forget to go show some love to the artist on his social media accounts.

Rembrandt

Rembrandt

Rembrandt

Dash From The Incredibles

To be fair, the picture on the left is probably fan art that doesn't look very much like the actual thing in Incredibles 2...

Russell From Up

The cartoon ones were creepy because the proportions were still exaggerated despite everything else being made realistic. It’s like if you took a smiley face emoji and *just* made the eyes realistic human eyes. Or like Annoying Orange. It just looks off ¯\_(ツ)_/¯

Darn, only a couple of images were realistic. The AI messed up the hat. 🤔

I mean, I get that it's an AI and all, but really, most of them don't work at all. Some of them are downright scary.

Well, this was disappointing. Harshly. The artist seems to have reinterpreted the faces without consistent attention to the details actually there, and according to personal preference, rather than simply interpreting them from one medium to another. Well-known details are ignored, like Mona Lisa's lack of eyebrows and Kahlo's uni-brow; facial structure is changed like with Franklin's and Rivera's chins and Rembrandt's mouth; and what is with the hair? Why does it all look like it's a wig formed from hair pulled from a drain and dried? Is there some mystery concerning parts and era-specific hairstyles?
